Artificial intelligence (AI) is transforming the HealthTech landscape. Advanced algorithms are increasingly embedded within medical devices, clinical decision support platforms and digital patient management systems, enabling healthcare organizations to use specialist AI tools to extract insights from vast and complex datasets. As healthcare data grows in scale and complexity, AI helps identify patterns and relationships that would be difficult, or impossible, to detect manually.

Approaches that combine machine learning with advanced analytical techniques allow developers to examine relationships across multiple data dimensions. One such technique is topological data analysis, which looks at the overall shape of data to reveal hidden trends. These methods help identify meaningful patient patterns, enabling earlier detection of risk factors and supporting more informed clinical decisions.

However, the effectiveness of AI systems depends on more than performance at initial deployment. Healthcare environments are dynamic, with patient populations, clinical practices and data collection methods continuously evolving. As these factors shift, the statistical patterns in clinical data may no longer match the patterns the model learned, which can reduce predictive reliability. Consequently, medical AI systems must be monitored and managed throughout their lifecycle to remain reliable, clinically relevant and safe.

Deploying AI models in clinical environments

Before an AI model can be deployed in a clinical setting, it must demonstrate reliable performance on representative healthcare data. Developers typically evaluate metrics such as accuracy, precision and recall, while validation studies assess whether predictions remain meaningful across diverse patient cohorts. However, this validation represents only a snapshot in time.
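As a minimal sketch of what evaluating these metrics across cohorts can look like, the Python example below computes accuracy, precision and recall separately for each patient group. The cohort names and records are made up for illustration, and a real validation study would use far larger samples and confidence intervals.

```python
from collections import defaultdict

def classification_metrics(y_true, y_pred):
    """Accuracy, precision and recall for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    total = len(pairs)
    return {
        "accuracy": (tp + tn) / total if total else float("nan"),
        "precision": tp / (tp + fp) if (tp + fp) else float("nan"),
        "recall": tp / (tp + fn) if (tp + fn) else float("nan"),
    }

def metrics_by_cohort(records):
    """records: iterable of (cohort, true_label, predicted_label) tuples."""
    grouped = defaultdict(lambda: ([], []))
    for cohort, t, p in records:
        grouped[cohort][0].append(t)
        grouped[cohort][1].append(p)
    return {c: classification_metrics(ts, ps) for c, (ts, ps) in grouped.items()}

# Hypothetical validation records: (cohort, true outcome, model prediction)
records = [
    ("under_65", 1, 1), ("under_65", 0, 0), ("under_65", 1, 1), ("under_65", 0, 1),
    ("65_plus", 1, 0), ("65_plus", 1, 1), ("65_plus", 0, 0), ("65_plus", 1, 0),
]
results = metrics_by_cohort(records)
for cohort, metrics in results.items():
    print(cohort, {name: round(value, 2) for name, value in metrics.items()})
```

In this toy data the model misses two of three positive cases in the older cohort, a gap that a single pooled accuracy figure would hide.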

Once deployed, models encounter data that may differ from their training sets, and over time, these shifts can reduce predictive accuracy, a phenomenon known as model degradation. Continuous performance monitoring, regular model reviews and retraining cycles help ensure that AI systems remain accurate and aligned with current clinical realities, supporting trust and safety in real-world healthcare applications.
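One simple way to picture continuous performance monitoring is a rolling window over recent predictions whose outcomes have since been confirmed. The sketch below is illustrative only: the class name, the baseline accuracy, the window size and the tolerance are all assumptions, and a production system would need to handle delayed outcomes and sampling uncertainty.

```python
from collections import deque

class RollingAccuracyMonitor:
    """Tracks accuracy over the most recent `window` confirmed predictions."""

    def __init__(self, baseline, window=100, tolerance=0.05):
        self.baseline = baseline      # accuracy measured at validation time
        self.tolerance = tolerance    # acceptable drop before a review is triggered
        self.outcomes = deque(maxlen=window)

    def update(self, y_true, y_pred):
        # Store 1 for a correct prediction, 0 for an incorrect one
        self.outcomes.append(1 if y_true == y_pred else 0)

    @property
    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self):
        # Wait until the window is full so early noise does not raise alarms
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.accuracy < self.baseline - self.tolerance

# Hypothetical settings: 90% validated accuracy, 10-case window
monitor = RollingAccuracyMonitor(baseline=0.90, window=10, tolerance=0.05)
for _ in range(10):
    monitor.update(1, 1)              # ten correct predictions
flag_before = monitor.needs_review()

for _ in range(3):
    monitor.update(1, 0)              # three recent misses enter the window
flag_after = monitor.needs_review()
print(flag_before, flag_after, monitor.accuracy)
```

A flag from a monitor like this is a prompt for model review, not proof of degradation on its own.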

Understanding data drift

One of the most common causes of declining model performance is data drift, which occurs when the statistical characteristics of input data change over time. In practical terms, this means that the data being fed into the model begins to differ from the data on which it was originally trained.

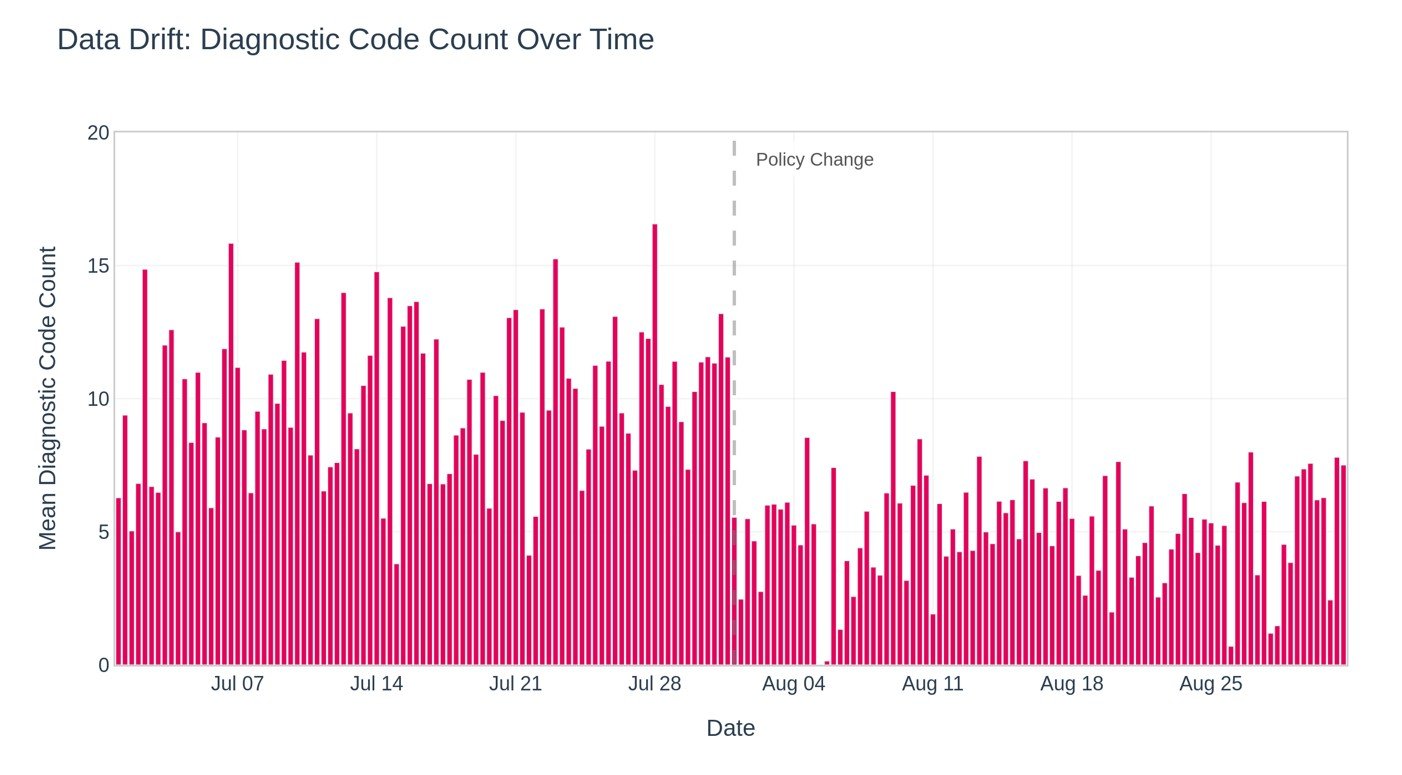

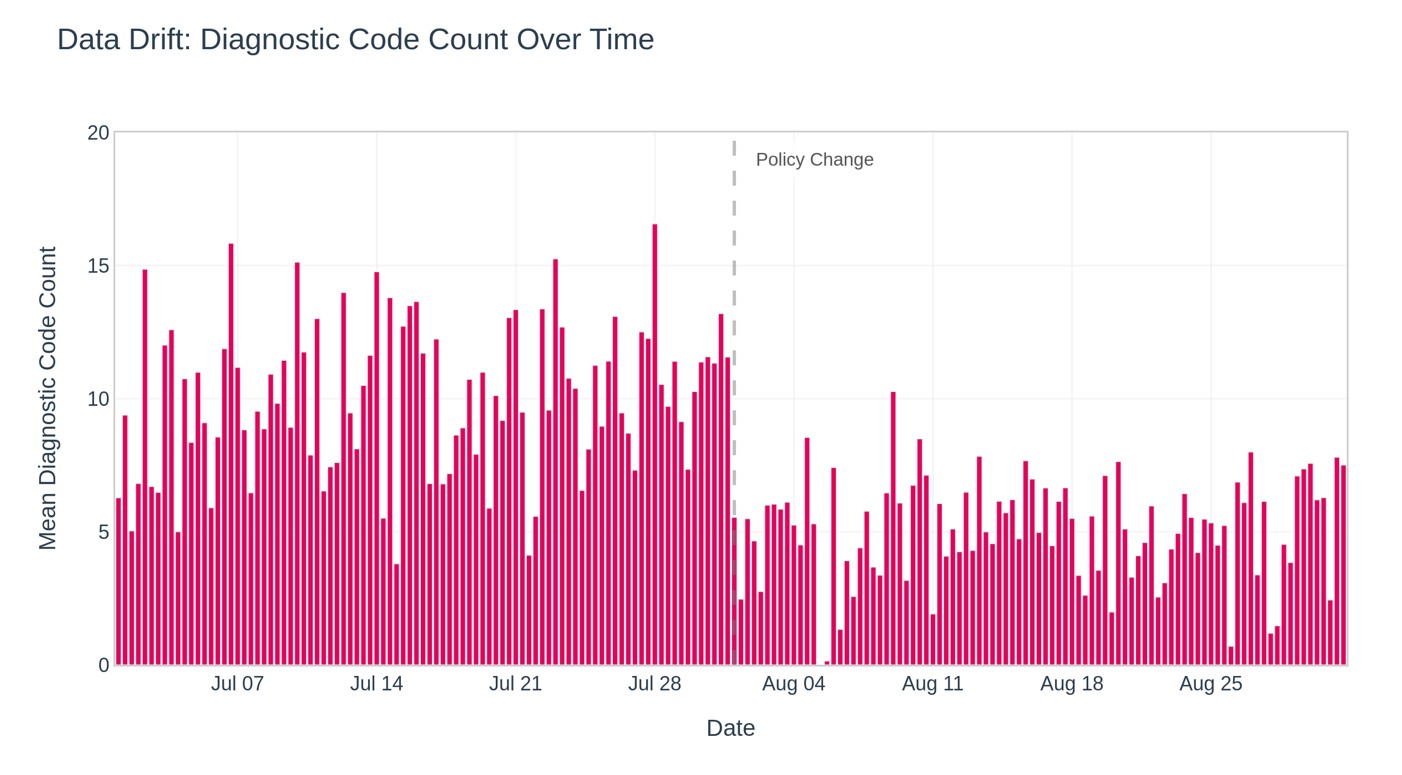

Even subtle changes in data distribution can influence how a model interprets information and generates predictions. For example, consider a predictive model trained using comprehensive diagnostic information captured during general practitioner consultations. If changes in healthcare policy introduce shorter consultation times, clinicians may record fewer secondary diagnoses. As a result, the dataset used by the model becomes less detailed than expected, which can reduce prediction accuracy. This phenomenon is illustrated in Figure 1, showing how a hypothetical policy change can reduce available diagnostic data and affect model performance.

Data drift does not necessarily indicate a problem with the underlying algorithm. Instead, it reflects the reality that healthcare systems evolve. Monitoring these changes allows developers to detect when the input data begins to diverge significantly from the training dataset.

Several analytical techniques can detect these shifts in the statistical structure of incoming data, including the Population Stability Index (PSI), divergence metrics and feature distribution analyses. When these indicators exceed predefined thresholds, they signal that the model may require further evaluation or retraining to maintain performance.
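To make the PSI concrete, the sketch below bins a single numeric feature using the training data's range and sums (actual − expected) × ln(actual / expected) across bins. The equal-width binning, the smoothing constant and the synthetic Gaussian data are assumptions chosen for illustration. A commonly quoted rule of thumb treats values below 0.1 as stable and values above 0.25 as a significant shift, but appropriate thresholds should be set per project.

```python
import math
import random

def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a reference sample and a newer sample of one numeric feature.

    Bin edges are equal-width over the range of the reference sample; newer
    values outside that range are counted in the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # degenerate case: the reference feature is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))

# Synthetic stand-ins for one feature at training time and after deployment
rng = random.Random(0)
training = [rng.gauss(0.0, 1.0) for _ in range(5000)]
recent_stable = [rng.gauss(0.0, 1.0) for _ in range(5000)]
recent_shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]

psi_stable = population_stability_index(training, recent_stable)
psi_shifted = population_stability_index(training, recent_shifted)
print(f"stable: {psi_stable:.3f}, shifted: {psi_shifted:.3f}")
```

In practice the same calculation would be repeated per feature, so that a high PSI points to the specific inputs that have changed.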

For organizations working with large-scale healthcare datasets, systematic monitoring of data drift provides an essential safeguard that helps maintain the reliability of AI-driven insights.

Figure 1

Understanding concept drift

While data drift relates to changes in the characteristics of input data, concept drift occurs when the underlying relationships between variables and clinical outcomes evolve. In this case, the data may appear similar, but the real-world meaning of that data has changed.

Healthcare is a field characterized by continuous innovation. New surgical techniques, emerging therapies and updated clinical guidelines can all influence how diseases are diagnosed and treated. As these changes occur, historical data may no longer fully reflect current clinical practices.

For instance, the introduction of a new surgical procedure that significantly reduces complication rates may alter the relationship between certain patient characteristics and predicted outcomes. A model trained on historical data may therefore overestimate risk levels if it has not been updated to incorporate these developments. Figure 2 demonstrates this effect, showing how predictions diverge from actual outcomes following a change in clinical practice.
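One simple signal of this kind of divergence is the ratio of observed events to the events the model expected, compared before and after the change in practice. The sketch below uses invented numbers and a hypothetical helper name; it is a rough calibration check, not a complete concept drift test.

```python
import math

def observed_to_expected(predicted_risks, outcomes):
    """Ratio of observed events to the sum of predicted risks.

    A ratio near 1 suggests predictions match reality on average;
    a ratio well below 1 suggests the model is overestimating risk.
    """
    expected = math.fsum(predicted_risks)
    observed = sum(outcomes)
    return observed / expected if expected > 0 else float("nan")

# Hypothetical cohorts of 50 similar patients, each scored at 20% complication risk
before_change = {"predicted": [0.20] * 50, "outcomes": [1] * 10 + [0] * 40}
after_change = {"predicted": [0.20] * 50, "outcomes": [1] * 4 + [0] * 46}

oe_before = observed_to_expected(before_change["predicted"], before_change["outcomes"])
oe_after = observed_to_expected(after_change["predicted"], after_change["outcomes"])
print(f"before: {oe_before:.2f}, after: {oe_after:.2f}")
```

Here the input data looks identical in both periods, which is why input-focused checks alone would not catch the change; only the comparison with actual outcomes reveals it.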

Managing concept drift requires close collaboration between data scientists and clinical experts. Clinicians play a vital role in identifying when treatment practices or patient care pathways have changed in ways that may affect model predictions.

Interpretability tools can further support this process. Techniques such as SHAP (SHapley Additive exPlanations) allow developers and clinicians to visualize which variables are contributing most strongly to a model’s predictions. These insights help ensure that the reasoning behind AI-generated outputs remains consistent with real-world clinical knowledge.
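SHAP builds on Shapley values from cooperative game theory. The sketch below does not use the SHAP library; it computes exact Shapley values for a toy risk score by averaging each feature's marginal contribution over every ordering of the features, relative to a single reference patient. The model, its coefficients and the feature names are hypothetical, and exhaustive enumeration is only practical for a handful of features.

```python
from itertools import permutations

def shapley_values(model, instance, baseline):
    """Exact Shapley values of `instance` relative to `baseline`.

    Features are switched from their baseline value to the instance value
    one at a time, in every possible order, and the change in model output
    attributed to each switch is averaged across orders.
    """
    features = list(instance)
    totals = {f: 0.0 for f in features}
    orderings = list(permutations(features))
    for order in orderings:
        current = dict(baseline)
        previous_output = model(current)
        for f in order:
            current[f] = instance[f]
            output = model(current)
            totals[f] += output - previous_output
            previous_output = output
    return {f: total / len(orderings) for f, total in totals.items()}

def toy_risk_score(x):
    # Hypothetical score with an interaction between diabetes and smoking
    return 0.02 * x["age"] + 0.5 * x["diabetes"] + 0.3 * x["diabetes"] * x["smoker"]

patient = {"age": 70, "diabetes": 1, "smoker": 1}
reference = {"age": 50, "diabetes": 0, "smoker": 0}

contributions = shapley_values(toy_risk_score, patient, reference)
for feature, value in contributions.items():
    print(f"{feature}: {value:+.2f}")
```

The contributions sum to the difference between the patient's score and the reference score, and the interaction term is shared equally between the two features involved. Clinicians can then judge whether the ranking of contributions matches their expectations.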

Figure 2

Managing drift in complex healthcare datasets

As healthcare datasets grow larger and more interconnected, managing model drift requires a blend of technical metrics, contextual analysis and clinical expertise. Advanced methods like topological data analysis enable exploration of dataset structures across multiple dimensions, revealing clusters and relationships that might otherwise remain hidden.

This approach is especially useful for identifying emerging patient subgroups or subtle shifts in treatment patterns that could impact model performance. By analyzing these broader patterns, developers can detect early changes in patient relationships and risk profiles before predictive accuracy suffers.

Risk-adjusted modelling plays a key role in this process, ensuring that variations in patient demographics, health conditions and procedural complexity are considered when interpreting performance changes. Visualizing model predictions and their evolution over time through trend analyses further supports timely detection of drift and informed intervention by both developers and clinicians.
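A small worked example shows why risk adjustment matters when interpreting performance changes. In the sketch below, expected events are calculated from each period's case mix using reference rates per risk stratum. The strata, rates and counts are invented for illustration.

```python
def expected_events(case_mix, reference_rates):
    """Expected event count given patients per stratum and stratum-level rates."""
    return sum(n * reference_rates[stratum] for stratum, n in case_mix.items())

# Hypothetical reference complication rates per risk stratum
reference_rates = {"low_risk": 0.02, "high_risk": 0.10}

# Hypothetical quarterly data: patients per stratum and observed complications
periods = {
    "Q1": {"case_mix": {"low_risk": 800, "high_risk": 200}, "observed": 36},
    "Q2": {"case_mix": {"low_risk": 500, "high_risk": 500}, "observed": 60},
}

summary = {}
for name, data in periods.items():
    patients = sum(data["case_mix"].values())
    expected = expected_events(data["case_mix"], reference_rates)
    summary[name] = {
        "crude_rate": data["observed"] / patients,
        "observed_to_expected": data["observed"] / expected,
    }
    print(name, summary[name])
```

The crude complication rate rises from 3.6% to 6.0% between the two quarters, yet the observed-to-expected ratio stays at about 1.0 in both. The apparent deterioration is explained by a sicker case mix, not by a change in model or clinical performance.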

Integrating monitoring into the AI lifecycle

Building and maintaining trust in medical AI requires the integration of monitoring and governance throughout the entire model lifecycle. Development, deployment and evaluation are interconnected steps, not isolated events. Continuous performance monitoring is essential to ensure models remain accurate and relevant. This includes regularly evaluating models with up-to-date clinical data and tracking both predictive performance and indicators of data or concept drift.

International standards such as ISO 13485 for medical device quality management systems and ISO/IEC 42001 for AI management systems provide important frameworks for maintaining safe, transparent and accountable AI systems. Equally important is collaboration between clinicians and data scientists. While technical teams build and maintain models, clinicians offer critical domain expertise to interpret predictions and confirm alignment with clinical realities.

Transparent communication with healthcare stakeholders strengthens trust by clearly explaining how models generate predictions, their limitations and the ongoing monitoring that supports responsible use.

Translating analytics into clinical insight

The ultimate goal of medical AI is not simply to generate predictions but to support better clinical decision-making. Achieving this requires the translation of complex analytical outputs into insights that healthcare professionals can understand and act upon.

Effective visualization plays an important role in this process. Trend-line graphs can highlight patients who may require additional monitoring, while feature importance analyses reveal which factors are contributing most strongly to predicted outcomes. These tools help clinicians interpret AI-generated insights within the context of their existing clinical workflows.

When integrated effectively, advanced analytics can enhance clinical expertise rather than replace it. By analyzing complex datasets at scale, AI systems can highlight patterns that inform earlier interventions, support risk assessment and help healthcare organizations allocate resources more efficiently.

Building long-term trust in medical AI

As AI becomes more deeply embedded within healthcare systems, maintaining trust in these technologies will remain a central priority. Reliable AI systems require more than advanced algorithms; they depend on robust monitoring frameworks, transparent governance and ongoing collaboration between technical and clinical teams.

Organizations that adopt a lifecycle approach to AI development, combining sophisticated analytics with continuous evaluation, are better positioned to ensure that their systems remain safe, reliable and clinically meaningful over time.

By transforming complex healthcare data into actionable insights while maintaining rigorous oversight, medical AI has the potential to improve patient outcomes and support more effective healthcare delivery. Ensuring that these systems are continuously monitored and responsibly managed will be essential to realizing that potential.

The post Maintaining trust in medical AI: Monitoring and managing model lifecycle appeared first on MedTech Intelligence.